Initial Placements

My war on fun began with analyzing Chinese Mahjong using probability theory. Today, I turn my slings and arrows on another classic: Settlers of Catan.

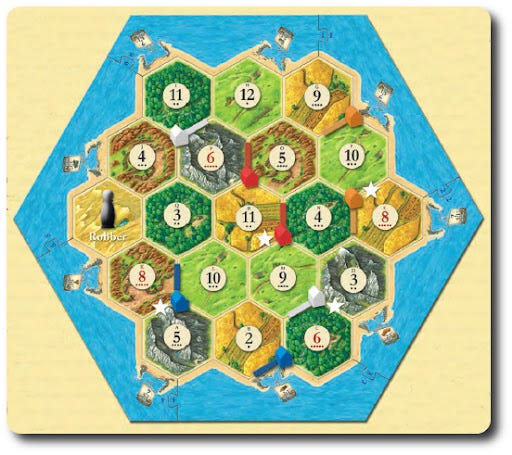

I will be assuming here that you’re already familiar with the rules of Catan, but here’s an example board for reference:

Catan is an incomplete knowledge game, meaning that the players have access to different amounts of information. One thing nobody knows is what the next roll of the dice will bring. In a game like chess, it’s possible to guarantee an outcome if you calculate all the relevant possibilities. In a game like Catan, the best you can do is maximize probabilities.

The little dots at the bottom of the numbers represent the relative likelihood of the next throw of the dice yielding that number. Some numbers are more likely than others because there are more ways to make them. For instance, there’s only one way to make a 2: a 1 on each die. But there are two ways to make a 3: a 2 on the first die and a 1 on the second, or vice versa.

Since there are 36 total possible dice rolls (6*6), the resulting probabilities are as follows:
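If you’d rather check the numbers yourself, here is a minimal Python sketch that counts the ways to make each total with two fair six-sided dice. The variable names are my own, and the output is just one way to lay the figures out.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# Count how many of the 36 ordered (die 1, die 2) outcomes produce each total.
ways = Counter(a + b for a, b in product(range(1, 7), repeat=2))

for total in range(2, 13):
    probability = Fraction(ways[total], 36)
    print(f"{total:>2}: {ways[total]} in 36 ({float(probability):.1%})")
```

For every number except 7, the count of ways is the same as the number of pips on the tile.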

These correspond to the dots (“pips”) at the bottom of the number tiles. The game is generally won by the player with the most resources, and a lot will be determined by the initial placements. Counting up the “pips” on the bottom of the tiles is a good rule of thumb for determining the value of an intersection.

But there are two other factors to consider: the ability to build more settlements and the exchange value of resources.

You can build a settlement without ore (although you can’t win without it) so if forced to decide on a resource to forgo, ore is your best bet. You can build towards an ore intersection later. Prioritizing wood and brick can also be an effective strategy because roads claim territory, and the more territory you claim the more options you have.

The other issue is the exchange value of a resource. By default, you can trade any resource at 4:1 with the bank. So, theoretically, you could compensate for a low probability of obtaining one resource with a very high probability of another. But, to balance the probabilities, you’d need about four extra pips of the second resource for every pip of the first that you give up. So, for instance, settling for a “2” brick tile instead of a “3” costs one pip of brick, which takes four extra pips of wheat to offset: a 6 wheat tile instead of a 2.

Usually, however, this is a poor strategy unless you can get a port. A 2:1 wheat port will compensate for a 1-pip reduction in the odds of another resource so long as you get at least a 2-pip bump on wheat. So going from a 3 to a 5 on wheat would be worth forgoing a 2 on brick.
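The pip arithmetic behind these trade-offs can be sketched in a few lines of Python. This is only a rule-of-thumb check under the assumption that one pip given up must be covered by `rate` pips gained; `swap_is_worth_it` and its parameters are hypothetical names of mine, not anything from the game.

```python
# Pips printed on each number token: the number of ways (out of 36) to roll it.
PIPS = {2: 1, 3: 2, 4: 3, 5: 4, 6: 5, 8: 5, 9: 4, 10: 3, 11: 2, 12: 1}


def swap_is_worth_it(scarce_from, scarce_to, surplus_from, surplus_to, rate):
    """Return True if the surplus pips gained cover the scarce pips lost at rate:1."""
    pips_lost = PIPS[scarce_from] - PIPS[scarce_to]
    pips_gained = PIPS[surplus_to] - PIPS[surplus_from]
    return pips_gained >= rate * pips_lost


# Brick drops from a 3 to a 2 while wheat rises from a 3 to a 5.
print(swap_is_worth_it(3, 2, 3, 5, rate=2))  # with a 2:1 wheat port
print(swap_is_worth_it(3, 2, 3, 5, rate=4))  # trading with the bank at 4:1
```

The same two-pip bump on wheat that pays for itself with a 2:1 port falls short at the bank’s 4:1 rate, which is why the port matters so much.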

So, barring an extreme advantage (like a 2:1 port on wheat and a 6 or 8 on the hex) you should generally opt for a balanced approach — attempting to obtain a moderate chance of each resource rather than a high likelihood of any particular one. In the beginning of the game, ore is the exception, but keep in mind that you’ll need it eventually.

You can even figure out the expected value of a tile by simply multiplying these odds by 50, roughly the number of dice rolls in a typical four-player game [1]:
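As a rough sketch, here is that multiplication in Python, assuming about 50 dice rolls per game and ignoring the turns when the robber is sitting on the tile. `ROLLS_PER_GAME` and `expected_payouts` are names I made up for illustration.

```python
from fractions import Fraction

ROLLS_PER_GAME = 50  # rough figure for a four-player game; an assumption

# Pips on each number token: the number of ways (out of 36) to roll it.
PIPS = {2: 1, 3: 2, 4: 3, 5: 4, 6: 5, 8: 5, 9: 4, 10: 3, 11: 2, 12: 1}


def expected_payouts(number, rolls=ROLLS_PER_GAME):
    """Expected number of times a tile with this number pays out over a game."""
    return float(Fraction(PIPS[number], 36) * rolls)


for number in sorted(PIPS):
    print(f"{number:>2}: about {expected_payouts(number):.1f} payouts per game")
```

A settlement collects one card per payout and a city collects two, so doubling these figures gives the rough value of upgrading.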

A similar logic applies to building settlements as opposed to cities. A city will double the value of an intersection, so if forced to choose between a city and a settlement, you should simply compare the probability values of the intersections, including their exchange values, taking into account the ports you have or could have.

The Robber

Everybody loves that little guy. Whenever you roll a 7 it’s stealin time!

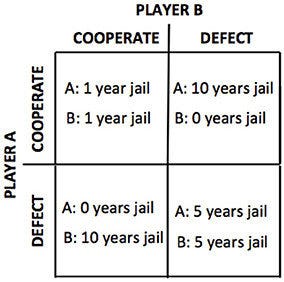

But, then again, maybe you shouldn’t be so eager. One of the earliest adventures in game theory began with the study of the Prisoner’s Dilemma. Imagine that you have two criminals locked in separate rooms and under interrogation. The police offer both of them the opportunity to testify against the other in exchange for a lighter sentence:

You can choose to either “cooperate” or “defect.” The interesting thing about the Prisoner’s Dilemma is that it illustrates how individual and collective interest can come apart. Notice that no matter what the other player does, it is always better for you to defect. If they cooperate and you defect, you get 0 instead of 1 years in jail. If they defect and you defect you get 5 years in jail as opposed to 10 years in jail. But the lowest total number of years in jail occurs when both prisoners cooperate (2 years in jail as opposed to 10).
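To make the argument concrete, here is a small Python sketch of the payoffs using the sentence lengths quoted above. The table layout and the `best_reply` helper are my own framing.

```python
C, D = "cooperate", "defect"

# Years in jail as (you, other), indexed by (your move, their move).
YEARS = {
    (C, C): (1, 1),
    (C, D): (10, 0),
    (D, C): (0, 10),
    (D, D): (5, 5),
}


def best_reply(their_move):
    """Return the move that minimizes your own sentence against their_move."""
    return min((C, D), key=lambda mine: YEARS[(mine, their_move)][0])


for their_move in (C, D):
    print(f"If they {their_move}, your best reply is to {best_reply(their_move)}")

# Combined sentence for each pair of moves.
totals = {moves: sum(years) for moves, years in YEARS.items()}
print(min(totals, key=totals.get), "gives the lowest combined sentence")
```

Defecting is the best reply either way, yet mutual cooperation is the only outcome with a combined sentence of 2 years.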

To minimize the number of years in jail for everybody, you’d want some way to enforce cooperation (so long as it wasn’t worse than the extra 8 years in jail it’s avoiding). So you tend to find a big difference in this game when played across multiple iterations. If you know the other player will find out about what you did, and then you’ll have to play with them again, there’s a much greater incentive to cooperate.

Under certain conditions, computer simulations and real-world studies have found that the best strategy in the “Iterated Prisoner’s Dilemma” is one known as Tit For Tat (TFT): you start by cooperating, and after that you do whatever the other player did last. If they defected, you defect. If they cooperated, you cooperate. Simple.

This can be a highly effective strategy for using the robber. If you don’t rob others, they’re less likely to rob you. Conversely, if you do rob them in response to them robbing you, they’re less likely to do so in the future.

There’s a well known problem with the TFT strategy where two players who adopt it can become locked in a kind of death spiral of endless retaliation. So an even more effective strategy tends to be somewhat forgiving, and will decline to retaliate once in a while to see if the other side responds in kind.
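Here is a toy Python simulation of that spiral, and of how a little forgiveness ends it. It is a sketch under simplifying assumptions: one player defects once by accident, and the forgiving variant lets every n-th defection slide on a fixed schedule (forgiving variants are usually described as random; I made it deterministic so the run is repeatable). All function and parameter names are hypothetical.

```python
C, D = "C", "D"


def tit_for_tat(opponent_history, forgive_every=None):
    """Copy the opponent's last move; optionally forgive every n-th defection."""
    if not opponent_history:
        return C  # open by cooperating
    if opponent_history[-1] == D:
        defections_seen = opponent_history.count(D)
        if forgive_every and defections_seen % forgive_every == 0:
            return C  # let this one slide
        return D
    return C


def play(rounds, slip_round, forgive_every=None):
    """Two tit-for-tat players; player A defects once by accident at slip_round."""
    a_moves, b_moves = [], []
    for r in range(rounds):
        a = tit_for_tat(b_moves, forgive_every)
        b = tit_for_tat(a_moves, forgive_every)
        if r == slip_round:
            a = D  # the accidental defection
        a_moves.append(a)
        b_moves.append(b)
    return "".join(a_moves), "".join(b_moves)


print(play(8, slip_round=2))                   # strict tit for tat
print(play(8, slip_round=2, forgive_every=2))  # forgiving variant
```

In the strict run the two players trade defections back and forth for the rest of the game. In the forgiving run the retaliation stops after a couple of exchanges and both go back to cooperating.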

This is how I typically use the robber, and I have found it to be a good balance. Once you acquire a reputation for defending yourself aggressively, but never being aggressive, other players are less likely to want to rob you.

Of course, there are some situations where these assumptions break down. Once you get towards the end of the game, the game theory begins to look much more like the single Prisoner’s Dilemma rather than the iterated one. If somebody is about to win, you don’t have too much reason to worry about retaliation, and you might as well go for it.

Rolling a 7

When a 7 is rolled, the robber doesn’t just move: anyone holding more than 7 cards loses half of them. Sometimes, however, you might want to risk it rather than spending your cards on your turn because you’re saving up for something big. This risk goes up the more players there are (because each one, including you, has an opportunity to roll a 7 before you can build again). This chart gives you the odds of that happening, depending on the number of players [2]:

The formula for calculating this is:

$$P = 1 - (1 - O)^N$$

Where O is the odds of a 7 occurring on each roll of the dice (see above) and N is the number of players. This is one minus the odds that none of the rolls in the next round is a 7, which is the same as the odds that at least one of them is a 7.
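The calculation is easy to reproduce. This Python sketch takes the odds of at least one 7 to be one minus the chance that none of the N rolls is a 7, with O = 6/36. The range of player counts is my own choice, and `risk_of_seven` is a hypothetical helper name.

```python
from fractions import Fraction

SEVEN = Fraction(6, 36)  # O in the text: six of the 36 outcomes total 7


def risk_of_seven(rolls):
    """Odds that at least one of the next `rolls` dice rolls is a 7."""
    return 1 - (1 - SEVEN) ** rolls


for players in range(1, 5):
    risk = risk_of_seven(players)
    print(f"{players} roll(s) before you build again: {float(risk):.1%}")
```

With four players the risk is a little over one half, so holding more than 7 cards through a full round is slightly worse than a coin flip.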

Trading

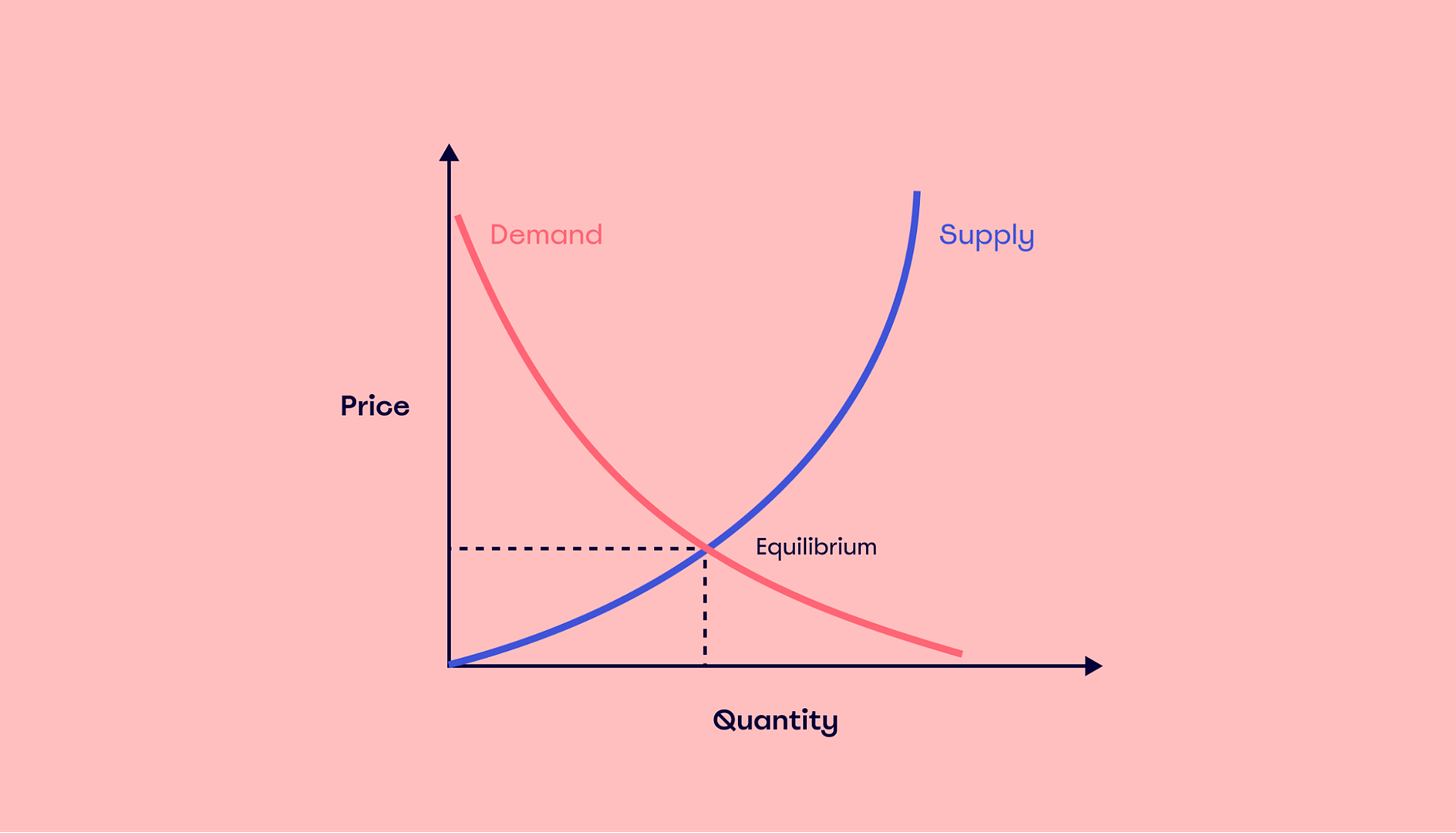

Trading in Catan is very much like a simple consumer market. It follows the laws of supply and demand:

Put simply, when there is less of a resource, other people will want to give you more for it and vice versa. So, it can be an effective strategy to corner the market on a particular resource. If you have all the wheat, everyone is going to want some of it.

But, it’s also the case that some resources have greater intrinsic value. People will generally need more brick and wood because a winning player will usually need a large number of roads, whereas they will only need wheat when building a settlement or city. A similar calculation exists for ore.

So, it makes sense to have a default assumption that the demand for brick and wood will be higher. If you can corner a large portion of that market, everyone is going to want to trade with you. This can definitely help compensate for shortcomings in your own resource production.

But, players are also not always rational agents. Sometimes, they won’t trade with you even if it’s to their benefit because you’re getting closer to winning, or because you placed the robber on them (see above). Economists have traditionally thought of the average consumer as a completely dispassionate rational agent who does whatever is best for them.

The psychologist Daniel Kahneman even won the Nobel Prize in economics for showing that people sometimes use timesaving mental shortcuts which lead them to conclusions that produce less than the best results. It’s always struck me as somewhat funny (and a great example of Ivory Tower Syndrome) that somebody actually won the Nobel for proving that the typical market participant is not a mindless bean-counting robot.

Fairness is another important factor, and some studies (again, rather unnecessarily I would think) have shown that people will reject unfair offers to punish the offeror even if it results in worse outcomes for themselves.

Now see, wasn’t that fun?

[1] A typical game of Catan with 4 players has about 50 dice rolls.

[2] There is a 5-6 player expansion for Catan, but it contains a special rule allowing each player to build on everybody’s turn, so the risk in that case would simply be the odds of a 7 coming up on the next die roll (see above).